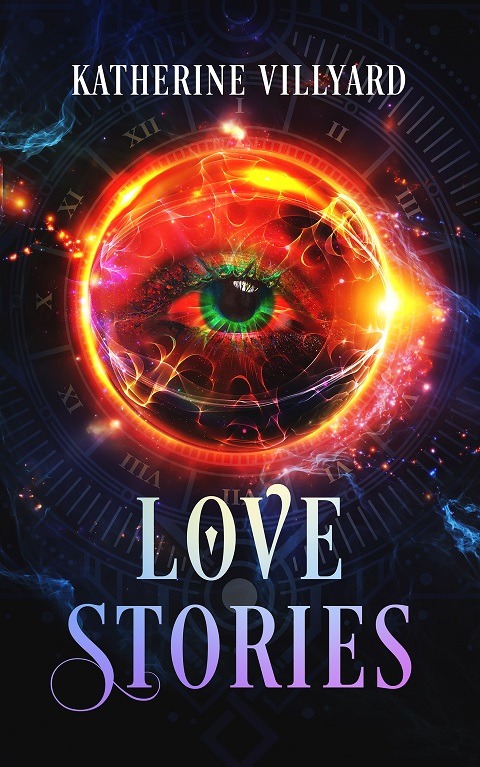

By Katherine Villyard

Love Stories

a short fiction collection

What is love? What can love drive us to do?

In these stories, love pushes us to revenge, to reach beyond the boundaries of life and death. To rescue a family member. To fight the good fight without even knowing it, or to risk everyone and everything to save someone who may or may not be worthy. To make the wrong decisions and walk into hell. To transcend who we are.

Love can make us both the best and worst versions of ourselves.

Author

Katherine Villyard

Published Books

Published Short Stories

years in SFWA

Other Publications

Broad Spectrum: The 2012 Broad Universe Fiction Sampler

Twenty-nine excerpts and complete short stories from members of Broad Universe, an organization promoting women in genre fiction: fantasy, horror and science fiction.

Fantastic Stories of the Imagination, January 2015 #224

Fantastic Stories of the Imagination, edited by Hugo and World Fantasy Award-nominated editor Warren Lapine, brings you the very best in science fiction and fantasy. Join us as we explore strange new worlds.

Alien Abduction: Collected Short Fiction

Herein you’ll find thought-provoking, wondrous, hopeful, frightening, and deeply human tales on the themes of aliens and abduction. Such stories have been with us far longer than most realize, perhaps for thousands of years, challenging humankind’s very position in the cosmos.

Katherine Villyard’s LOVE STORIES blazes across space and time and includes an array of futuristic short stories linked by the theme of love and the drama it can provoke. The stories, populated by mortals, Gods, wizards and androids, vary in time and their fantastical settings. Each blends plot and character into an imaginative array that will dazzle readers.

– IndieReader

Upcoming Events

August 8–12, 2024

Worldcon • Glasgow, Scotland

The World Science Fiction Convention (Worldcon) is an annual international gathering of science fiction and fantasy fans, writers, artists, musicians, and creators. First held in New York City in 1939, Worldcon visits a new city around the globe each year and is organised by a different group of dedicated volunteers who participate in a bidding process that is open to any group that meets a small number of technical regulations.

The longest-running science fiction convention in the world, Worldcon originally focused only on science fiction and fantasy. While both remain important, Worldcon continues to grow with each year of its celebration, now including genre television, films, animation, role-playing and tabletop gaming, video games, and other popular media as well. Since the mid-1970s, Worldcons have had an average attendance of 4,000 to 5,000 fans, with more or fewer depending on the host city.

Newsletter Exclusive!

Available now

Becoming

Short Fiction

In this short fiction teaser for my novel in progress, an innocent young man must face an ancient evil—including an evil that has taken root inside him—and learn who he really is.

My Writing Blog

Follow Along

I <3 UK

So, apparently? I am also number 1 in the UK!

I’M SCREAMING!!!!!!!!!!

I'm number one in Canada!!!! I love you, Canada! <3 [Edit on 3/21/24] I should have checked Kobo yesterday, but today I'm #3 there! [/edit] (Amazon swears I'll have a sales rank in the UK tomorrow, but I currently don't have one.)

Bookbub Baby!

I have an international-only Bookbub deal TODAY.